Generative AI for Business - Update

Update - I have published four additional articles: one documenting our strategy to enable context injection (embeddings) at scale, a progress update regarding our findings, an overview of our latest enhancements, and an end-of-year summary.

Last month, my team launched a Generative AI Chatbot for business use, designed to accelerate productivity by streamlining common tasks.

It is comparable to ChatGPT and leverages two large language models (specifically OpenAI GPT-3.5 and Google PaLM 2), making it capable of understanding text-based inputs and generating human-like responses.

The key difference from ChatGPT (and equivalent services) is that our Generative AI Chatbot is private, with specific business features and controls to meet our security, privacy, legal, and compliance requirements.

Initially, we launched the Generative AI Chatbot to 100 early adopters, targeting a wide range of common business tasks. For example:

- Answers (Information, Knowledge Articles, Guides, etc.)

- Ideation (Brainstorming, Storyboarding, etc.)

- Content Creation (Documents, Policies, Processes, Communications, etc.)

- Content Review (Literature, Journals, Papers, Press Releases, etc.)

- Summarisation (Executive Summaries, Key Messages/Findings, Reports, etc.)

- Research Analysis (Qualitative, Observations, Deductive Reasoning, Survey, etc.)

- Software/Data Engineering (100+ Programming/Scripting Languages)

- Translations (85+ Languages)

I am pleased to report that the early adopter phase was a huge success, with viral growth beyond the original 100-user scope. One user commented, “this Generative AI Chatbot has given me 50% more productivity in my day”, which speaks directly to the value we had hoped to unlock.

The early adopter phase also provided valuable feedback, resulting in six additional releases during the first month, delivering user experience enhancements and response tuning. We also monitored the usage patterns, further informing our policies and processes regarding the use of Generative AI services.

As a result, following discussions with the relevant internal stakeholders, we agreed to open the service to all employees (approximately 14,000).

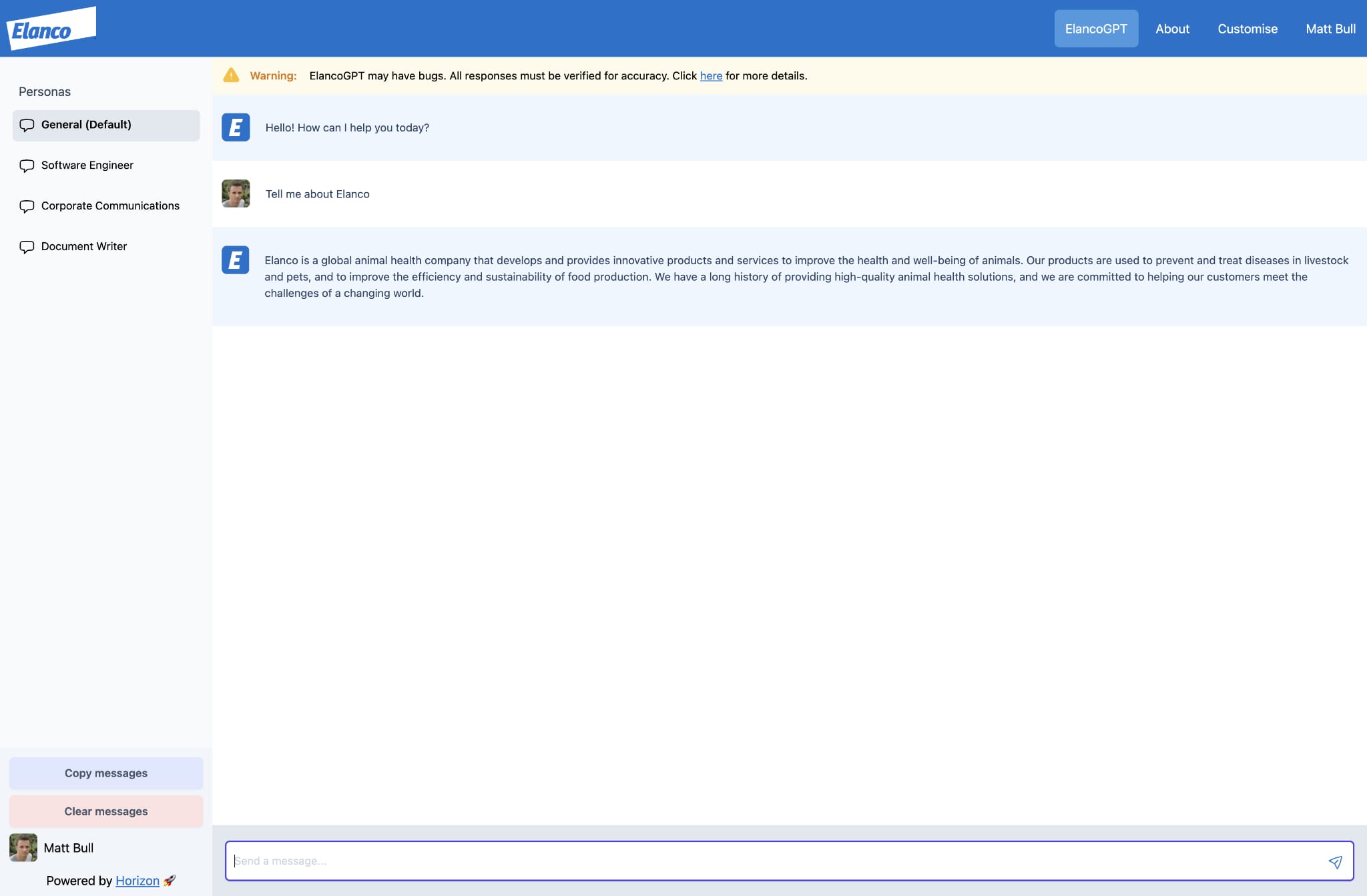

This included a new user interface (screenshot above), with a focus on custom personas.

The custom personas incorporate prompt engineering techniques that were tuned during the early adopter phase. Each persona targets a specific outcome, including rules that define the task, format and tone, resulting in a more accurate and appropriate response.
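As a rough illustration of the idea (not our production implementation), a persona can be modelled as a small set of rules that is rendered into a system message ahead of the user's prompt. The `Persona` class, its field names, and the `build_messages` helper are hypothetical, and the role/content message shape is assumed from the common chat-style format.

```python
from dataclasses import dataclass


@dataclass
class Persona:
    """Hypothetical persona: a name plus rules for task, format and tone."""
    name: str
    task: str
    response_format: str
    tone: str
    context: str = ""  # optional business-specific context


def build_messages(persona: Persona, user_prompt: str) -> list[dict[str, str]]:
    """Render the persona rules as a system message, followed by the user prompt."""
    rules = [
        f"You are acting as: {persona.name}.",
        f"Task: {persona.task}",
        f"Format: {persona.response_format}",
        f"Tone: {persona.tone}",
    ]
    if persona.context:
        rules.append(f"Context: {persona.context}")
    return [
        {"role": "system", "content": "\n".join(rules)},
        {"role": "user", "content": user_prompt},
    ]


# Illustrative rules only; the real persona wording is not shown in this article.
software_engineer = Persona(
    name="Software Engineer",
    task="Support common development tasks with readable code snippets.",
    response_format="A short explanation followed by one code block.",
    tone="Concise and practical.",
)

messages = build_messages(software_engineer, "Write a function that reverses a string.")
```

Keeping the rules as data rather than free text makes it straightforward to tune one rule (for example, the tone) without touching the others.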

We also enhanced the ability for users to create their own custom personas by providing the rules and any context as input, resulting in a unique URL that can be used and/or shared with colleagues. This feature has been used to prime the Generative AI Chatbot with business-specific context, as well as a “quick and dirty” way to test the model against specific data sets (e.g., Knowledge Articles, Processes, Journals, etc.).

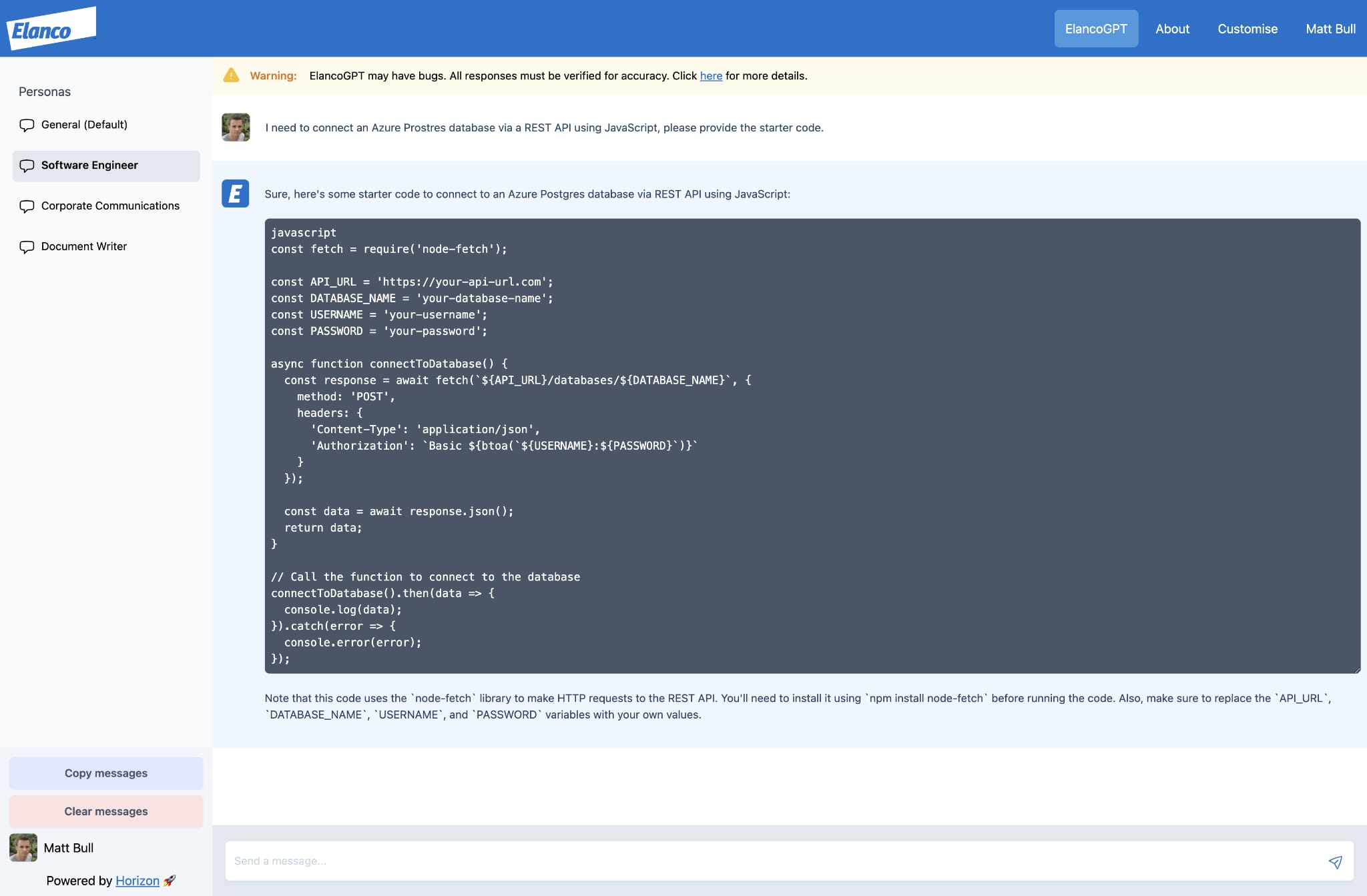

For example, the screenshot above highlights the “Software Engineer” persona being used to support common development tasks, with a focus on producing readable code snippets that incorporate any relevant business context.

On the first business day, 450+ unique users accessed the Generative AI Chatbot, submitting thousands of prompts. Thanks to our decoupled architecture (outlined in the previous article), we did not see any scale issues, and the total cost across all users remained very low (< $50 per month).

It should be noted that, by default, we prohibit the use of public Generative AI services (although we are testing GitHub Copilot), which likely influenced the strong adoption.

Our next goal is to unlock business data/context, ingested from multiple data sources. This is a complex problem as data integrity becomes paramount, ensuring the responses accurately reflect the business data/context. As a result, although we have an approach working, we have not yet released this capability to production.

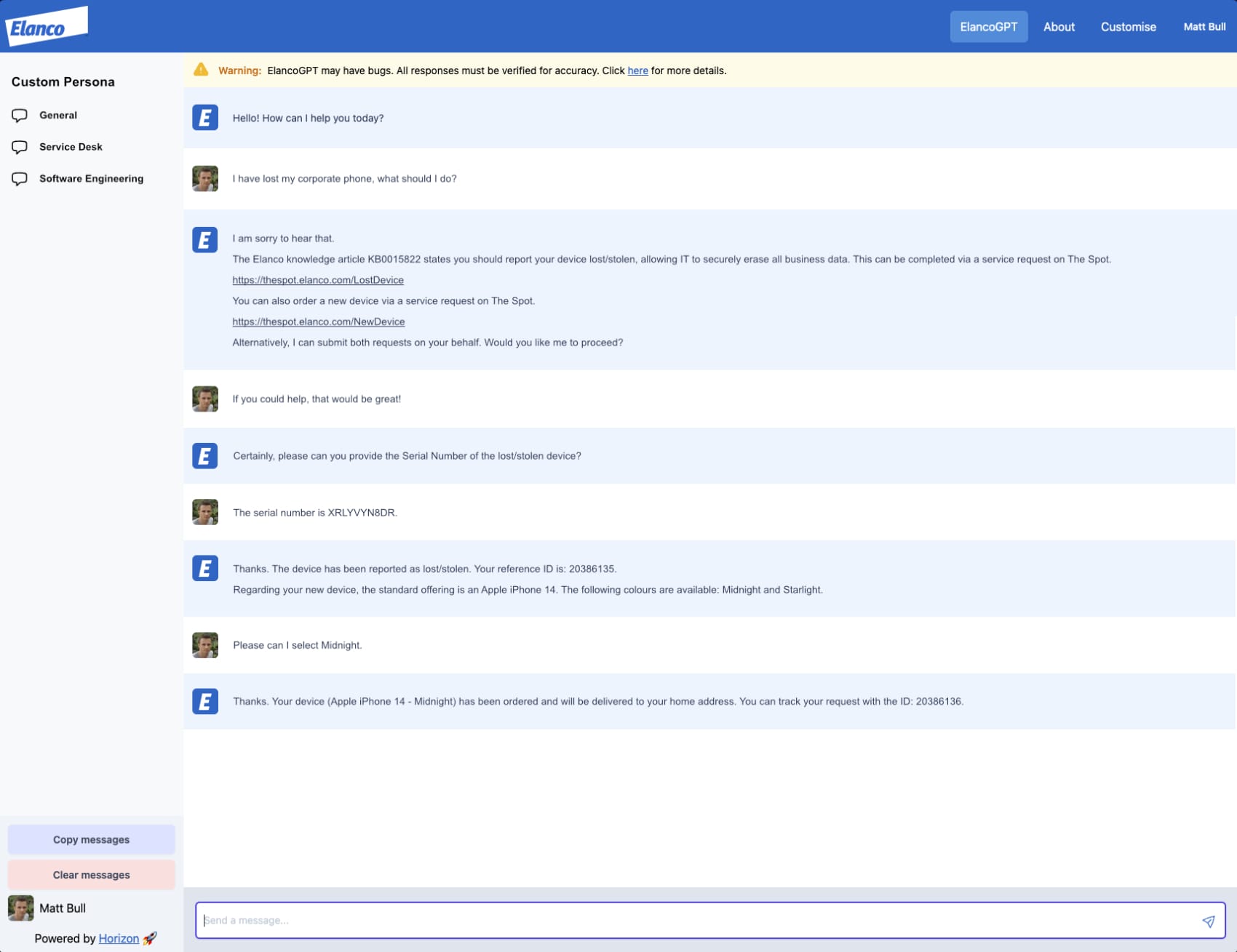

The screenshot above highlights our target state, where the Generative AI Chatbot can interpret business data. For example, in the screenshot, a knowledge article is used as the reference, resulting in a natural language interaction that triggers downstream automation.
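To make the idea of using a knowledge article as the reference more concrete, here is a minimal sketch of context injection. It is an assumption-laden toy rather than our actual approach: the bag-of-words `vectorise` function stands in for a real embedding model, and the article titles, article text, and helper names are all hypothetical.

```python
import math
import re
from collections import Counter


def vectorise(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def most_relevant(question: str, articles: dict[str, str]) -> str:
    """Return the title of the article most similar to the question."""
    q = vectorise(question)
    return max(articles, key=lambda title: cosine(q, vectorise(articles[title])))


def build_prompt(question: str, articles: dict[str, str]) -> str:
    """Inject the best-matching article into the prompt as the only reference."""
    title = most_relevant(question, articles)
    return (
        "Answer using only the reference below. "
        "If the answer is not in the reference, say so.\n\n"
        f"Reference ({title}):\n{articles[title]}\n\n"
        f"Question: {question}"
    )


# Hypothetical knowledge articles, for illustration only.
articles = {
    "Password reset": "To reset your password, open the self-service portal and choose reset password.",
    "Expense claims": "Submit expense claims with receipts through the finance portal within 30 days.",
}

prompt = build_prompt("How do I reset my password?", articles)
```

Instructing the model to answer only from the injected reference is one simple way to address the data integrity concern, because any response can be traced back to a specific article.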

Like many enterprise businesses, we have massive datasets (structured and unstructured) that can be a challenge to discover and/or consume, impacting speed to value. We hope that exposing this data via natural language will ease these pain points, whilst also unlocking additional insights for a broader (non-technical) audience.

In conclusion, many technologies are hyped as “the next big thing”. However, I believe that the current hyper-innovation we are seeing with Generative AI, backed by this massive market investment and momentum, has a real chance of being a global disruptor.

Based on conversations with my peers, I believe we are one of the first enterprise businesses to release a Generative AI Chatbot to all users, including business-specific features and security controls.

As to what the future holds, I am unclear. What is clear is that businesses that invest wisely and move with agility will have a tremendous competitive advantage.