Generative AI for Business

Update - I have published five additional articles documenting the go-live of our Generative AI Chatbot for Business, our strategy to enable context injection (embeddings) at scale, a progress update regarding our findings, an overview of our latest enhancements, and an end-of-year summary.

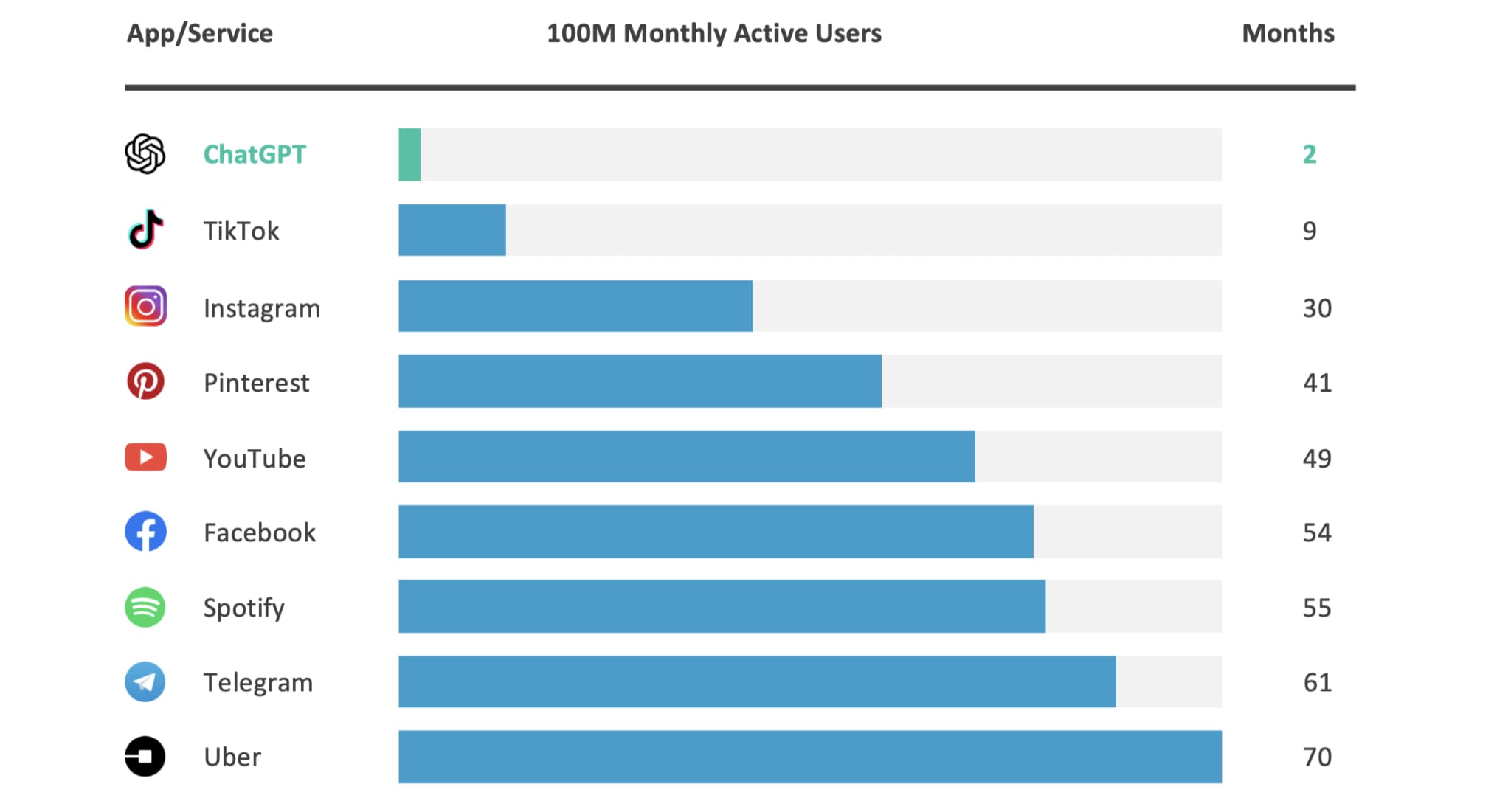

Since the launch of ChatGPT in November 2022, Artificial Intelligence (AI) has officially hit the mainstream, unlocking new opportunities and risks.

ChatGPT achieved 100 million active users in just two months. This unprecedented growth convinced Microsoft to reprioritise its business strategy, resulting in a rumoured $10B investment in OpenAI and the announcement of portfolio-wide AI capabilities (e.g., 30+ Copilot products in development).

Since February 2023, there has been an onslaught of AI announcements, with every technology company on earth desperately attempting to convince their customer base that they are “leaders in AI”. The focus is Generative AI, a branch of AI that involves the development of models capable of producing novel and realistic content based on patterns and examples learned from training data.

As a CTO, I have been inundated with messages and meeting requests to discuss Generative AI, with imminent announcements expected from Salesforce.com, ServiceNow, Workday, SAP, and many others.

As a recent example, Google mentioned “AI” 143 times during the two-hour keynote presentation at Google I/O, which is approximately 1.2 times per minute. This should not be a surprise, as Google are arguably the company with the most to lose if Generative AI were to disrupt traditional web usage (specifically web advertising).

In reality, many of these announcements are companies attempting to capitalise on the AI hype cycle (fear of missing out) and therefore should be treated with caution. For example, if a company is positioning its capabilities as “Private Preview”, it is reasonable to assume they are in early development rather than “leaders” in this space.

With that said, as highlighted in my previous article, I do see tremendous personal and business potential regarding the use of Generative AI.

As a result, I have a small development team experimenting with these capabilities for business use, prioritising Microsoft (backed by OpenAI), Google and the open-source community.

In my opinion, Microsoft and Google are the most credible technology companies offering business-specific capabilities for Generative AI (Meta is also doing some interesting work). The primary differentiator is the availability of tools, example projects, and architecture materials to support development, including the relevant enterprise business controls (e.g., security, privacy, compliance, etc.).

However, it is arguably the open-source community that is demonstrating the highest rate of innovation with interesting projects such as Stanford Alpaca, Koala, GPT-J, Hugging Face, etc.

The goal of the development team is to build and learn, helping to mature our understanding and shape our thinking regarding the use of Generative AI.

As a result, we have built a business-specific Generative AI Chatbot that is the equivalent of ChatGPT, providing the same ability to answer queries, create and/or review content, generate reports and summaries, accelerate research activities, translate text, and much more.

At this time, we have been using two large language models, GPT-3.5 (specifically gpt-3.5-turbo) and PaLM2 (which powers Google Bard), integrated into a business-centric web application where all prompts and responses are private (no interaction with a public interface).

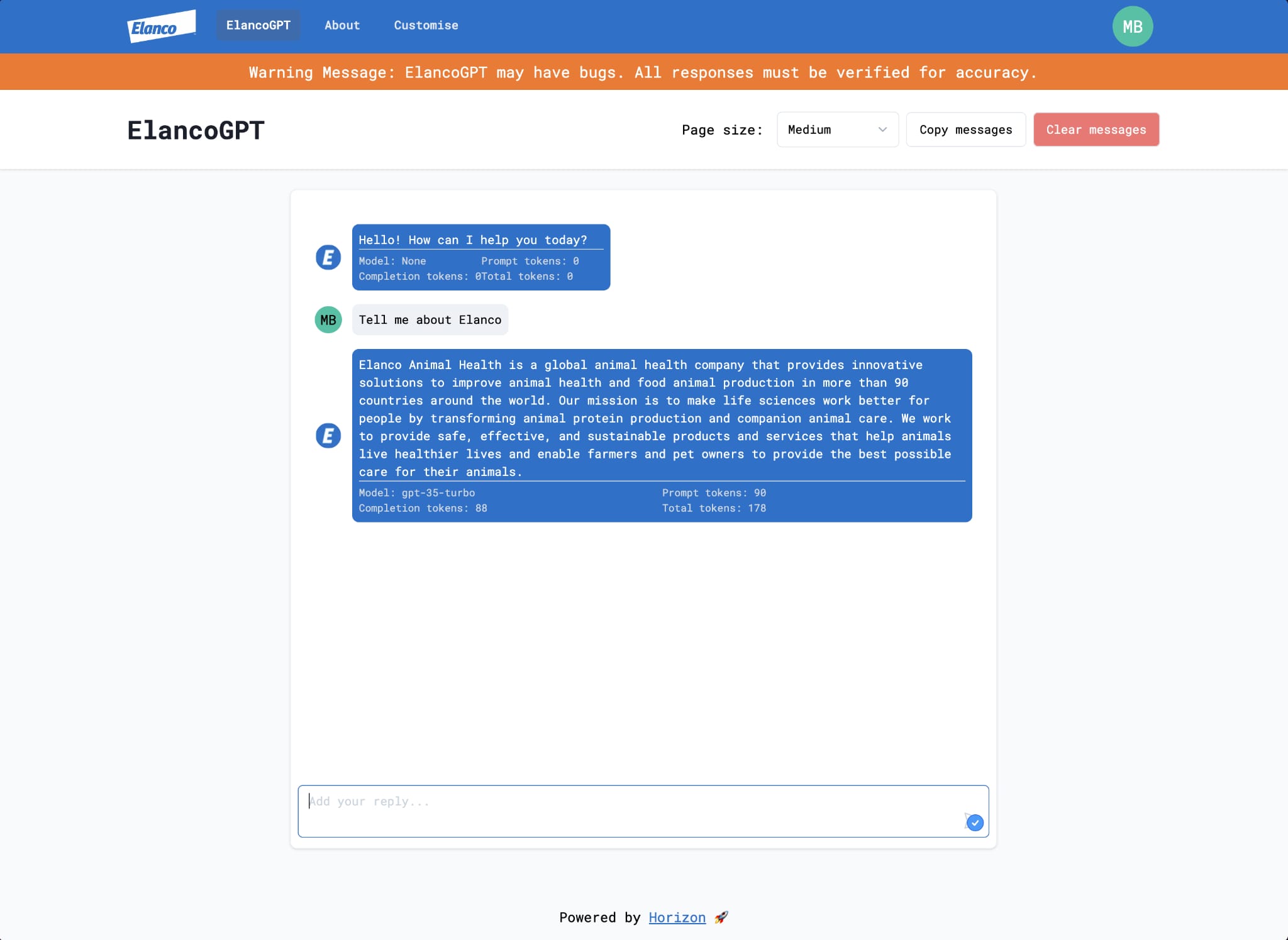

The screenshot highlights the user interface used to interact with the Generative AI Chatbot. As you can see, it is fairly intuitive and familiar to anyone who has used ChatGPT (or an equivalent Generative AI service).

Regarding features, we have integrated our company security standards, specifically modern authentication (SSO/MFA), alongside individual session management, allowing users to retain context across multiple sessions. These features ensure all prompts, responses, data and use cases are private, adhering to our company’s privacy, legal and compliance policies.

We have also developed a specific capability to declaratively customise the initial prompt (prompt engineering), resulting in a new URL using the same underlying application architecture.

Prompt engineering is a technique that involves designing and optimising prompts to improve the performance of a large language model. Prompts are short pieces of text that are used to guide the model’s output, specifying the task to perform, as well as the desired output format, and any other relevant information/constraints.

Our default prompt stipulates that the Generative AI Chatbot is a business assistant and should attempt to respond professionally, using all available data sources. The prompt engineering feature allows for a new custom prompt to be defined (replacing the default), resulting in a new URL that can be used for more specific, tightly defined tasks.

The custom prompt can be used to target a specific persona, for example Service Desk (Help Desk), Corporate Communications, Investor Relations, Marketing, Paralegal, Software/Data Engineering, etc. This approach helps improve the accuracy of the responses, whilst ensuring they are consistent with the expectations of the specific persona.
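As a minimal sketch of how a declarative custom prompt could map to its own URL (not our actual implementation), the example below looks up a persona from the URL path and uses its prompt in place of the default. The `Persona` class, the `PERSONAS` table, the URL slugs, the prompt wording and the helper names are all hypothetical, and the system/user message layout is simply the common chat-style convention.

```python
from dataclasses import dataclass

# Hypothetical default prompt, paraphrasing the behaviour described above.
DEFAULT_PROMPT = (
    "You are a business assistant. Respond professionally, "
    "using all available data sources."
)


@dataclass(frozen=True)
class Persona:
    slug: str           # URL path segment, e.g. /chat/service-desk
    system_prompt: str  # replaces the default prompt for this URL


# Illustrative persona definitions; slugs and wording are assumptions.
PERSONAS = {
    p.slug: p
    for p in [
        Persona("service-desk", "You are a Service Desk agent. Answer IT support questions concisely."),
        Persona("paralegal", "You are a paralegal assistant. Use precise, formal language."),
    ]
}


def system_prompt_for(path: str) -> str:
    """Return the custom prompt for a URL path, or the default if none matches."""
    slug = path.rstrip("/").rsplit("/", 1)[-1]
    persona = PERSONAS.get(slug)
    return persona.system_prompt if persona else DEFAULT_PROMPT


def build_messages(path: str, user_prompt: str) -> list[dict[str, str]]:
    """Build a chat-style message list with a system and a user message."""
    return [
        {"role": "system", "content": system_prompt_for(path)},
        {"role": "user", "content": user_prompt},
    ]
```

With this shape, adding a persona is a data change (one more `Persona` entry) rather than a code change, which is the appeal of keeping the prompt declarative.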

Finally, and maybe most excitingly from a business perspective, we have unlocked business data. This allows the Generative AI Chatbot to respond with business context, covering policies, processes, knowledge articles, journals, papers, etc.

The ability to ingest business data was a little more complex and required the use of some additional cloud components, specifically search (Google Enterprise Search or Azure Cognitive Search) to facilitate the indexing and retrieval of information from internal data repositories.

With the business data indexed, custom middleware extracts the keywords from the user prompt and matches the output (best effort) with the indexed data. The corresponding sentence (or sentences) from the indexed data are provided as part of the response using natural language, including a link (citation) to the relevant data sources.

Behind the scenes, this approach uses a technique known as “prompt stuffing”, where the matched sentences from the indexed data are added (stuffed) into the prompt for the large language model to use as context when responding. This is a fairly brittle approach (something I expect to improve over time), with limitations regarding the token count.
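The flow above (extract keywords, match indexed sentences, stuff them into the prompt within a token limit) can be illustrated with a small, self-contained sketch. It assumes a toy in-memory index of `(sentence, source_url)` pairs instead of a real search service, a naive stop-word list, and a whitespace word count as a rough stand-in for real tokenisation. All function names, the URLs in the usage note and the default budget are hypothetical.

```python
import re

# Naive stop-word list; a real system would use a proper search index.
STOPWORDS = {"a", "an", "and", "are", "do", "for", "how", "i", "in", "is", "of", "the", "to", "what"}


def keywords(text: str) -> set[str]:
    """Lower-case the text and keep the words that are not stop words."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def match_sentences(prompt: str, index: list[tuple[str, str]], top_k: int = 3) -> list[tuple[str, str]]:
    """Rank indexed (sentence, source_url) pairs by keyword overlap with the prompt."""
    wanted = keywords(prompt)
    scored = [(len(wanted & keywords(sentence)), sentence, url) for sentence, url in index]
    scored = [item for item in scored if item[0] > 0]    # drop non-matches
    scored.sort(key=lambda item: item[0], reverse=True)  # best match first
    return [(sentence, url) for _, sentence, url in scored[:top_k]]


def stuff_prompt(prompt: str, matches: list[tuple[str, str]], max_tokens: int = 200) -> str:
    """Add matched sentences to the prompt until the approximate token budget is spent."""
    context, used = [], 0
    for sentence, url in matches:
        cost = len(sentence.split())  # crude approximation of the token count
        if used + cost > max_tokens:
            break
        context.append(f"- {sentence} (source: {url})")
        used += cost
    return (
        "Answer using only the context below and cite the sources.\n"
        "Context:\n" + "\n".join(context) + f"\n\nQuestion: {prompt}"
    )
```

For example, given an index containing "Annual leave requests must be approved by your line manager." with a source of `https://intranet.example/hr/leave`, the prompt "How do I request annual leave?" matches on "annual" and "leave". That sentence and its source link are then placed in the context ahead of the question. The budget check is where the token-count limitation shows up: once the budget is spent, remaining matches are silently dropped.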

The accuracy of the response can vary depending on the quality of the user prompt and the precision of the matched data. However, we have found the accuracy can be improved when combined with the previously mentioned prompt customisation feature. For example, the custom prompt can state that the Generative AI Chatbot is a support agent and should only respond using a specific internal data repository (e.g., knowledge articles), instead of all known data sources.
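One way to picture the repository restriction is as a filter applied to the indexed data before any matching takes place. This is a hedged sketch only: the `IndexedSentence` type, the repository labels and the `restrict_to_repositories` helper are hypothetical, and in practice the restriction might instead be expressed in the prompt or as a filter in the search service.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class IndexedSentence:
    text: str
    source_url: str
    repository: str  # hypothetical label, e.g. "knowledge-articles" or "policies"


def restrict_to_repositories(
    index: list[IndexedSentence], allowed: Optional[set[str]]
) -> list[IndexedSentence]:
    """Keep only sentences from the allowed repositories (None means all sources)."""
    if allowed is None:
        return list(index)
    return [item for item in index if item.repository in allowed]
```

A support-agent persona could then pass `{"knowledge-articles"}` as `allowed`, so only knowledge articles are candidates for matching, while the default business assistant passes `None`.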

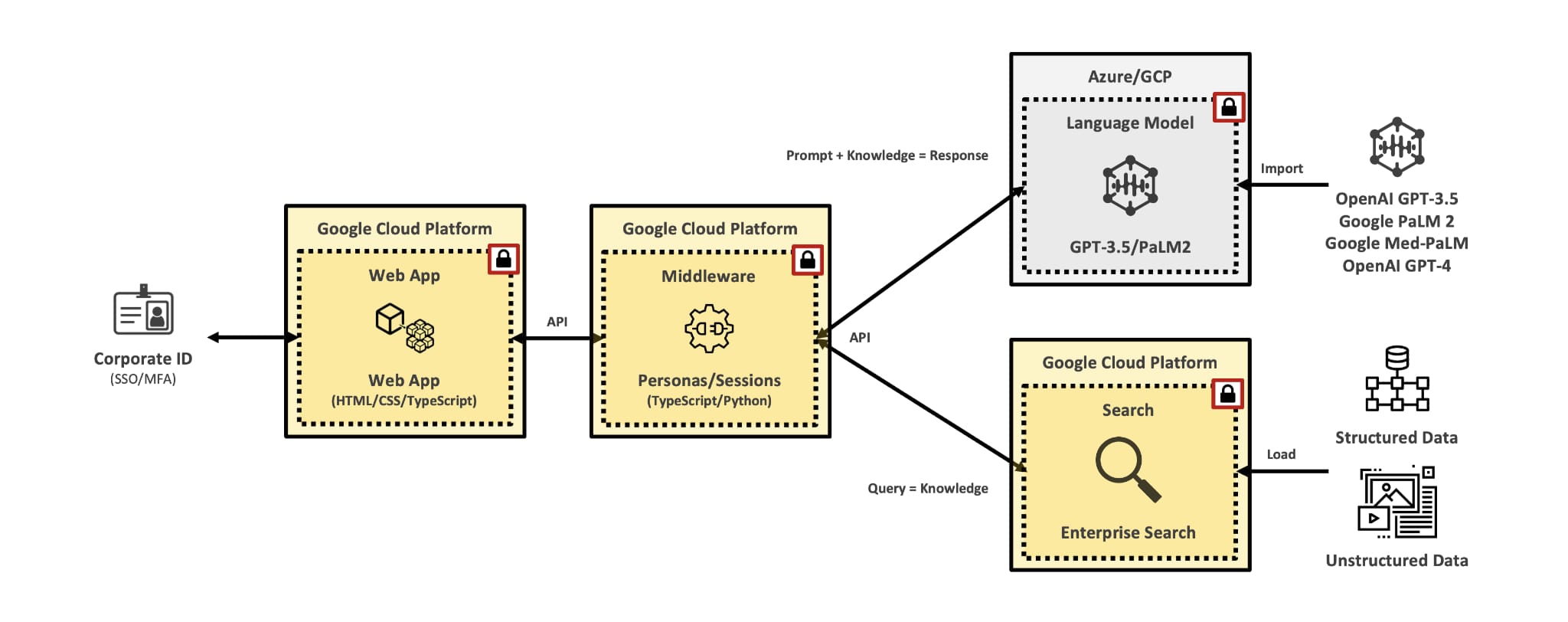

The “marchitecture” diagram highlights the design we have implemented.

The diagram shows a hybrid architecture with the front-end and middleware running on Google Cloud Platform (GCP), connected to Azure OpenAI Service and Google PaLM2 for the large language model. Google Enterprise Search is used to index the data. It is certainly possible to build this architecture end-to-end on Microsoft Azure or Google Cloud Platform (GCP). However, we purposely decided to target a loosely coupled architecture to provide maximum flexibility as we test and learn. For example, we have multiple large language models available, specifically GPT-3.5 and PaLM2, with the plan to add GPT-4 soon.
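To illustrate what loosely coupled can mean at the code level, the sketch below has the middleware depend on a small interface rather than on a specific vendor SDK. It is an assumption-laden example, not our production code: `ChatModel`, `EchoModel`, the `MODELS` registry and `answer` are hypothetical names, and `EchoModel` is a stand-in that makes no network calls where a real adapter would wrap the Azure OpenAI or PaLM2 client.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Minimal interface the middleware depends on, independent of any vendor SDK."""

    def complete(self, system_prompt: str, user_prompt: str) -> str: ...


class EchoModel:
    """Stand-in for a real model adapter; it simply echoes the user prompt."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, system_prompt: str, user_prompt: str) -> str:
        return f"[{self.name}] {user_prompt}"


# Registry of available models; the keys are illustrative identifiers.
MODELS: dict[str, ChatModel] = {
    "gpt-3.5-turbo": EchoModel("gpt-3.5-turbo"),
    "palm2": EchoModel("palm2"),
}


def answer(model_name: str, system_prompt: str, user_prompt: str) -> str:
    """Route a request to the chosen model through the shared interface."""
    try:
        model = MODELS[model_name]
    except KeyError:
        raise ValueError(f"Unknown model: {model_name}") from None
    return model.complete(system_prompt, user_prompt)
```

Under this design, adding another model such as GPT-4 would mean registering one more adapter in `MODELS`, with no change to the front-end or the rest of the middleware.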

At this time, we have opened our Generative AI Chatbot to 100 early adopters, with the plan to expand to 500 in June. This includes a wide range of personas, covering Manufacturing, Research & Development, Commercial, Corporate Communications, Finance, Legal, IT, etc.

The initial feedback has been very positive, with use cases covering:

- Answers (Information, Knowledge Articles, Guides, etc.)

- Ideation (Brainstorming, Storyboarding, etc.)

- Content Creation (Documents, Policies, Processes, Communications, etc.)

- Content Review (Literature, Journals, Papers, Press Releases, etc.)

- Summarisation (Executive Summaries, Key Messages/Findings, Reports, etc.)

- Research Analysis (Qualitative, Observations, Deductive Reasoning, Survey, etc.)

- Software/Data Engineering (100+ Programming/Scripting Languages)

- Translations (85+ Languages)

Our Generative AI Chatbot is positioned as a productivity accelerator, not a replacement for human intelligence. Therefore, the individual providing the input and receiving the response is accountable for the accuracy (verification).

Do we expect our Generative AI Chatbot to scale further? Maybe… The market is evolving quickly and we will adapt accordingly. For example, we fully expect to see this architecture pattern “bundled” as a service offering from specific vendors. However, it creates questions about cost (assuming everyone will charge a premium for Generative AI capabilities) and the user experience (multiple discrete Generative AI Chatbots).

Ultimately, this project is an experiment (learning experience) for IT and the business, whilst also an opportunity to drive immediate value through enhanced productivity and cost savings.

Finally, it is worth mentioning that our Generative AI Chatbot was built by two developers (Calum Bell and Charlie Hamerston-Budgen), over ten business days (not dedicated to this project), with sponsorship/consultancy from myself and our friends at Microsoft/Google.