Automation

This article is part of a series. I would recommend reading the articles in order, starting with “Modern IT Ecosystem”, which provides the required framing.

As a brief reminder, this series aims to explore the “art of the possible” if an enterprise business could hypothetically rebuild IT from the ground up, creating a modern IT ecosystem.

In the article “Hybrid Multi-Cloud”, I shared my application/data hosting strategy, covering SaaS, Public Cloud, Colocation Data Centres and Edge Computing. This strategy emphasises modern application architecture but offers enough flexibility to support almost any workload.

To unlock the value of this strategy, automation becomes a necessity, helping to streamline development and operations, improve the user experience and enforce security, quality and privacy.

This article will highlight my proposed automation architecture, describing my philosophy, key technology decisions and positioning.

Introduction

Historically, when dealing with legacy technologies, the effort to enable automation would often outweigh the value. Thankfully, due to advancements in Cloud, API-Centric Architecture and Software-Defined Infrastructure, automation has not only become simpler but has also become a prerequisite for unlocking the value of a modern IT ecosystem. This is highlighted by the value proposition outlined below.

- Improved Speed: Reduce the reliance on manual intervention, streamlining IT processes from ideation to production.
- Improved Agility: The ability to deliver continuous improvements, enhancements and issue resolution.
- Improved Autonomy: Self-service IT provisioning, supporting multiple personas (e.g. Product Team, Engineering Team, etc.).
- Improved Flexibility: The ability to complete real-time, ad-hoc releases across the entire IT ecosystem (e.g. Cloud, Colocation DC, Edge Computing, etc.).
- Enforced Best Practices: Enforce consistent solution design and developer best practices (e.g. Style Guides, etc.).
- Improved Information Security: Centralised governance, including role-based access and monitoring. Proactively mandate and enforce security controls (e.g. Linters, Source Code Analysis, Package Analysis, etc.).
- Improved Quality: Proactive service discovery and CMDB Configuration Item creation. Proactively mandate and enforce quality controls, including testing (e.g. Application Scaffolding, Application Manifest, Unit Testing, UI Testing, etc.).
- Improved Privacy: Mandate and enforce privacy controls, including data encryption, obfuscation, residency, high availability and backup.
- Cost Optimisation: Removal of duplicate technologies (technical debt) supporting IT provisioning, governance, budgeting, discovery and change control. Removal of time-consuming manual tasks and the associated resources.

To reinforce my position on automation, I have embedded key automation concepts and techniques into my IT principles and IT declarations. For example, the three statements below give clear direction on the desired approach to architecture.

- Server Deployments: By default, new IaaS deployments must target immutable infrastructure, including ephemeral (short-lived) and stateless (no persisted session state) servers.
- Software-Defined: By default, all cloud services (SaaS, Public Cloud), Colocation Data Centres and Edge Computing must be fully automated, leveraging Infrastructure-as-Code and Software-Defined techniques.
- Automated: By default, new application deployments must be automated, leveraging build tools and/or automation services.

A full description of my IT principles and IT declarations can be found in the article “Modern IT Ecosystem”.

Automation Requirements

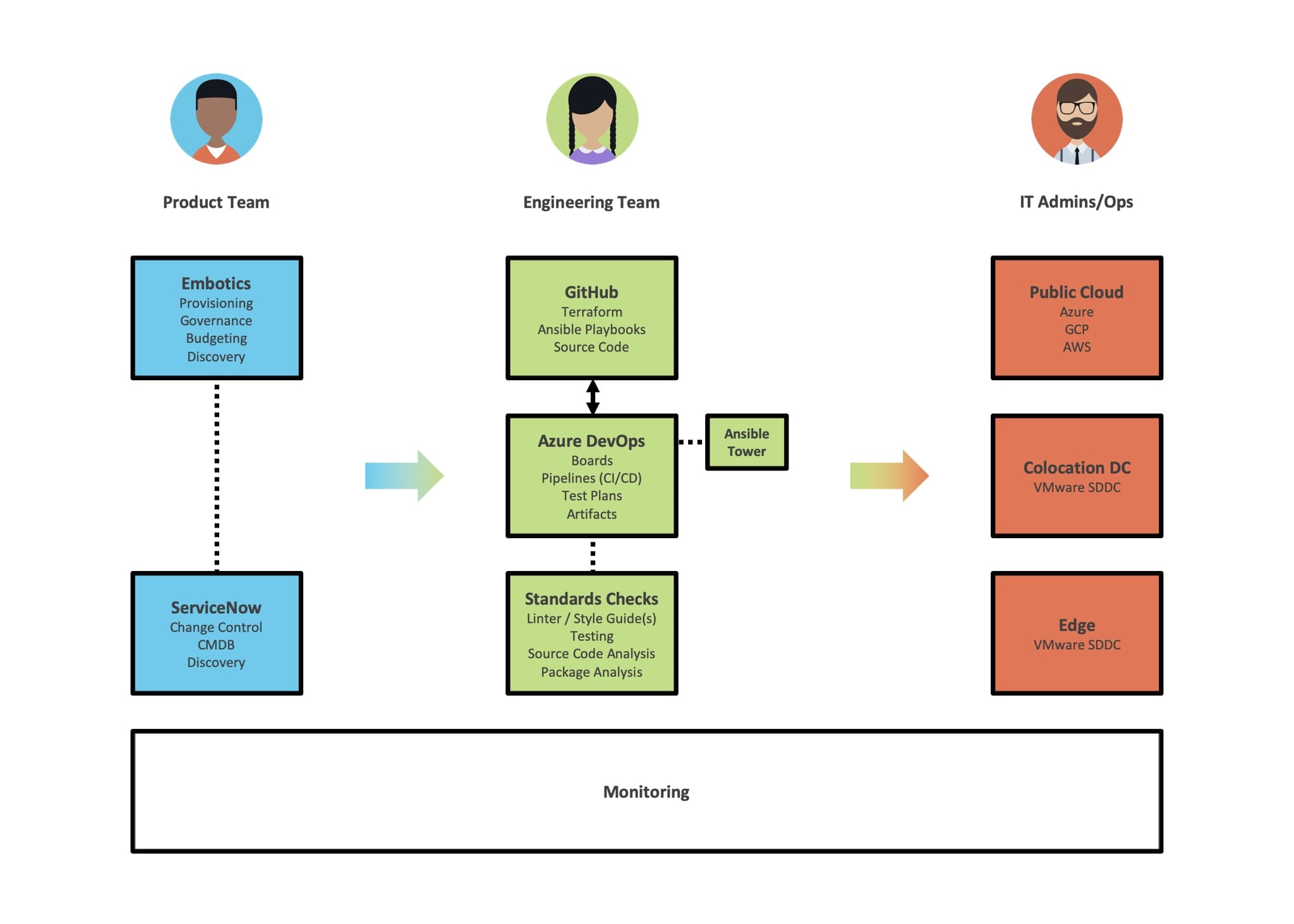

The word “automation” covers a very wide scope; therefore, with the previously defined business characteristics in mind, I have created the following requirements, starting with three personas:

- Product Team: IT Users and/or Business Partners. Assume basic IT skills.
- Engineering Team: IT Users and/or Service Integrators. Assume moderate/advanced IT and software development skills.
- IT Admins/Operations: IT Users and/or Service Integrators. Assume moderate/advanced IT and software development skills.

These personas would interact with the following automated capabilities, connecting back to the previously stated value proposition.

- Provisioning: The ability to create, modify and provision low-level (e.g. Server) and high-level (e.g. DNS) infrastructure, as well as software services.
- Governance: The ability to create, modify and maintain groups that control the user’s ability to provision, modify and remove infrastructure and services.
- Budgeting: The ability to proactively understand provisioning costs, as well as track the spend over time (based on defined groups).
- Discovery: The ability to identify, track and manage all provisioned infrastructure and services, including the creation and maintenance of CMDB Configuration Items.
- Change Control: The ability to track and manage change across all provisioned infrastructure and services.
- Operations: The ability to trigger housekeeping and maintenance activities, based on pre-defined criteria/events and/or predictive insights.
- Testing: The ability to proactively trigger pre-defined tests, covering Unit, Module, Integration, System and UI.
- Standards Checks: The ability to proactively trigger pre-defined security, quality and privacy checks.
- Documentation: The ability to generate and maintain quality (installation/configuration/change) documentation.
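To make the Governance and Budgeting requirements more concrete, here is a minimal Python sketch of a pre-provisioning check: a request is only approved if the requesting group may provision that resource type and the projected spend stays within the group's budget. The `Group` and `Request` types, the resource types and the budget figures are all hypothetical; it illustrates the idea, not how any Cloud Management Platform implements it.

```python
from dataclasses import dataclass


@dataclass
class Group:
    """Hypothetical governance group (e.g. one per Product Team)."""
    name: str
    allowed_types: set[str]      # resource types members may provision
    monthly_budget: float        # spend ceiling for the group
    spend_to_date: float = 0.0   # spend already tracked this month


@dataclass(frozen=True)
class Request:
    """Hypothetical provisioning request raised via a service catalogue."""
    group: str
    resource_type: str
    estimated_monthly_cost: float


def evaluate(request: Request, groups: dict[str, Group]) -> tuple[bool, str]:
    """Governance and budget check, run *before* anything is provisioned."""
    group = groups.get(request.group)
    if group is None:
        return False, f"unknown group '{request.group}'"
    if request.resource_type not in group.allowed_types:
        return False, f"'{request.resource_type}' is not permitted for {group.name}"
    projected = group.spend_to_date + request.estimated_monthly_cost
    if projected > group.monthly_budget:
        return False, f"projected spend {projected:.2f} exceeds budget {group.monthly_budget:.2f}"
    return True, "approved"


def record_spend(group: Group, amount: float) -> None:
    """Track actual spend against the group, supporting chargeback reporting."""
    group.spend_to_date += amount


groups = {
    "web-team": Group("web-team", {"web_app", "database"}, monthly_budget=1000.0, spend_to_date=850.0),
}
print(evaluate(Request("web-team", "web_app", 100.0), groups))
print(evaluate(Request("web-team", "database", 200.0), groups))
print(evaluate(Request("web-team", "server", 50.0), groups))
```

The first request fits within the remaining budget, the second would push the group over its ceiling, and the third asks for a resource type the group is not entitled to.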

To achieve this outcome, new concepts, techniques and technologies would need to be implemented, including a paradigm shift to Immutable Infrastructure.

Immutable Infrastructure

In a traditional “mutable” infrastructure, services can be modified post-deployment, usually via a manual process. For example, a developer and/or administrator would log in to a server via RDP/SSH and complete a set of manual steps to modify configuration, packages, etc.

Immutable infrastructure is a newer paradigm in which services cannot be modified post-deployment. If a modification is required, a new service is built from a common image that already includes the required changes, and it replaces the existing service. This is only possible if a modern application architecture is followed, for example, The Twelve-Factor App methodology.
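As an illustration of the replace-rather-than-modify idea, the Python sketch below models images and servers as frozen dataclasses, so a deployed server cannot be changed in place. A change produces a new image version, and a roll-out replaces every server with a fresh one built from it. The `Image`/`Server` types and the `roll_out` helper are hypothetical and only model the paradigm; real tooling would bake actual machine or container images.

```python
import itertools
from dataclasses import dataclass, replace

_server_ids = itertools.count(1)  # simple ID generator for the sketch


@dataclass(frozen=True)
class Image:
    """A common (golden) image; frozen, so it cannot be changed after creation."""
    name: str
    version: str
    packages: tuple[str, ...]


@dataclass(frozen=True)
class Server:
    """A server built from an image; frozen to model 'no post-deployment changes'."""
    server_id: int
    image: Image


def build_server(image: Image) -> Server:
    return Server(server_id=next(_server_ids), image=image)


def roll_out(fleet: list[Server], new_image: Image) -> list[Server]:
    """Replace every server with a new one built from new_image (no in-place patching)."""
    return [build_server(new_image) for _ in fleet]


base = Image("web", "1.0", ("nginx",))
fleet = [build_server(base) for _ in range(3)]

# A change (e.g. adding a package) produces a new image version, not a modified server.
patched = replace(base, version="1.1", packages=base.packages + ("openssl",))
new_fleet = roll_out(fleet, patched)
```

Trying to assign `fleet[0].image = patched` raises `dataclasses.FrozenInstanceError`, which mirrors the rule that servers are replaced (cattle) rather than nursed back to health (pets).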

The “Pets vs. Cattle” analogy is often used to describe this paradigm shift: historically, services were treated like pets (one of a kind, long-lived, managed by hand), whereas modern (immutable) services are treated like cattle (one of many, shorter-lived, managed at scale).

To support the previously defined value proposition, I would prioritise immutable infrastructure, which inherently promotes the use of automation, enabled via technologies such as Infrastructure-as-Code (IaC).

Infrastructure-as-Code

Infrastructure-as-Code (IaC) is about writing code that describes the infrastructure, similar to writing a program in a high-level language. Once defined as code, infrastructure can be managed as if it were software, where resources can be created, removed, replaced, resized and migrated, leveraging automation.
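The core idea behind declarative IaC tools such as Terraform is that the desired state is written down as data, compared with the current state, and a plan of actions is derived from the difference. The Python sketch below models that comparison with plain dictionaries. It is a simplification under stated assumptions, not Terraform's implementation: the resource names and attributes are hypothetical, and the rule that a change to `image` or `region` forces a replacement (rather than an in-place update such as a resize) is an illustrative choice.

```python
# Hypothetical: changing any of these attributes forces the resource to be replaced.
IMMUTABLE_ATTRS = {"image", "region"}


def plan(desired: dict[str, dict[str, str]], current: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """Compare desired vs current state and return (action, resource) pairs."""
    actions: list[tuple[str, str]] = []
    for name in sorted(desired):
        spec = desired[name]
        if name not in current:
            actions.append(("create", name))
            continue
        # Attributes whose values differ between the desired and current state.
        changed = {k for k in spec.keys() | current[name].keys() if spec.get(k) != current[name].get(k)}
        if not changed:
            continue  # already in the desired state
        if changed & IMMUTABLE_ATTRS:
            actions.append(("replace", name))
        else:
            actions.append(("update", name))  # e.g. a resize
    for name in sorted(current.keys() - desired.keys()):
        actions.append(("delete", name))  # no longer declared, so remove it
    return actions


desired = {
    "web-1": {"size": "large", "image": "web-v2"},
    "web-2": {"size": "small", "image": "web-v1"},
    "db-1": {"size": "medium", "image": "pg-15"},
}
current = {
    "web-1": {"size": "small", "image": "web-v2"},
    "web-2": {"size": "small", "image": "web-v0"},
    "old-1": {"size": "small", "image": "legacy"},
}
print(plan(desired, current))
```

Running the plan yields a create for `db-1`, an in-place update (resize) for `web-1`, a replacement for `web-2` because its image changed, and a delete for `old-1`.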

Building upon my Hybrid Multi-Cloud strategy, I would position Terraform and Ansible as part of my proposed automation architecture.

Terraform is a cloud-agnostic Infrastructure-as-Code capability owned by HashiCorp. It is written in Go, and infrastructure is described using the HashiCorp Configuration Language (HCL). It includes an open-source command-line tool, which can be used to provision (low-level and high-level) infrastructure to multiple hosting environments, including Azure, GCP and AWS, as well as VMware.

Ansible is an open-source Configuration Management, Deployment and Orchestration tool owned by Red Hat. Ansible uses Playbooks (YAML files) to execute specific tasks, often focused on server configuration. Like Terraform, Ansible is agentless, supporting a wide range of platforms and hosting environments.

In my proposed architecture, Terraform would be positioned to provision infrastructure, delegating configuration tasks to Ansible. The short video below from VMware provides a good overview of how and why Terraform and Ansible complement each other.
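One common way to hand over from Terraform to Ansible is to expose the provisioned addresses as Terraform outputs and turn them into an Ansible inventory. `terraform output -json` prints the root module's outputs as JSON, with each output's data under a `value` key. The Python sketch below assumes hypothetical outputs named `web_ips` and `db_ips` (lists of addresses) and writes them as an INI-style Ansible inventory; the output names and group names are illustrative only.

```python
import json
import subprocess


def read_terraform_outputs(workdir: str) -> str:
    """Run `terraform output -json` in a working directory that has already been applied."""
    result = subprocess.run(
        ["terraform", "output", "-json"],
        cwd=workdir, capture_output=True, text=True, check=True,
    )
    return result.stdout


def to_ansible_inventory(outputs_json: str, group_map: dict[str, str]) -> str:
    """Map Ansible group names to Terraform outputs holding lists of host addresses."""
    outputs = json.loads(outputs_json)
    lines: list[str] = []
    for group, output_name in group_map.items():
        lines.append(f"[{group}]")
        lines.extend(outputs[output_name]["value"])
        lines.append("")  # blank line between groups
    return "\n".join(lines)


# Sample in the shape produced by `terraform output -json` (hypothetical output names).
sample = json.dumps({
    "web_ips": {"sensitive": False, "type": ["list", "string"], "value": ["10.0.0.4", "10.0.0.5"]},
    "db_ips": {"sensitive": False, "type": ["list", "string"], "value": ["10.0.1.10"]},
})
inventory = to_ansible_inventory(sample, {"web": "web_ips", "db": "db_ips"})
print(inventory)
```

Saved as, say, `inventory.ini`, the result could then be passed to Ansible with `ansible-playbook -i inventory.ini site.yml`, where `site.yml` stands in for whichever playbook configures the servers.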

These two technologies not only adhere to my IT principles (open-source, decoupled, API-first) but also help to enable my Hybrid Multi-Cloud strategy, meeting the requirement to deploy to multiple hosting environments (Public Cloud, Colocation DC and Edge Computing).

Finally, it should be noted that, as with any technology, Terraform and Ansible have their own set of challenges (e.g. Timely Updates, State Management, Secret Management, etc.). With this in mind, cloud provider-specific Infrastructure-as-Code (e.g. Microsoft ARM Templates) would be positioned to mitigate any gaps.

Automation Architecture

Alongside Terraform and Ansible, I would position the following technologies to support the wider automation requirements.

- Embotics: SaaS-based Cloud Management Platform (CMP), which supports provisioning (via Terraform), governance (group management and change control), budgeting (proactive cost analysis and chargeback), as well as discovery (CI creation).
- GitHub: SaaS-based source code management, providing distributed version control for Terraform configurations, Ansible Playbooks and application source code.
- Azure DevOps: A suite of SaaS-based services covering CI/CD capabilities, as well as test tooling and package management, maximising the return on investment with Microsoft.
- ServiceNow: SaaS-based IT Service Management (ITSM), providing change control and configuration management database (CMDB) capabilities.
- Monitoring: A suite of tools (e.g. Azure, VMware, Application Performance Management) that monitors the end-to-end infrastructure, applications and data, with the ability to predict issues and proactively trigger maintenance activities.
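To illustrate what “predict and proactively trigger maintenance” could mean in its simplest form, the Python sketch below fits a least-squares linear trend to disk-usage samples, estimates when usage will cross a threshold, and flags housekeeping if that is within a time horizon. The metric, the 90% threshold and the 24-hour horizon are hypothetical, and linear extrapolation is a deliberately simple stand-in for the richer models that monitoring and APM tools use.

```python
from typing import Optional


def hours_until_threshold(samples: list[tuple[float, float]], threshold: float) -> Optional[float]:
    """Estimate hours until usage reaches `threshold`, via a least-squares linear trend.

    `samples` are (hour, percent_used) pairs. Returns None when there is no upward trend.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in samples) / n
    mean_u = sum(u for _, u in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return None
    slope = sum((t - mean_t) * (u - mean_u) for t, u in samples) / var_t
    if slope <= 0:
        return None  # usage is flat or falling, so no breach is predicted
    intercept = mean_u - slope * mean_t
    crossing = (threshold - intercept) / slope  # hour at which the trend line hits the threshold
    latest = max(t for t, _ in samples)
    return max(0.0, crossing - latest)


def should_trigger_housekeeping(samples: list[tuple[float, float]],
                                threshold: float = 90.0,
                                horizon_hours: float = 24.0) -> bool:
    """Trigger maintenance (e.g. log clean-up) if a breach is predicted within the horizon."""
    eta = hours_until_threshold(samples, threshold)
    return eta is not None and eta <= horizon_hours


disk_usage = [(0, 60.0), (1, 62.0), (2, 64.0), (3, 66.0)]  # hypothetical hourly samples
print(hours_until_threshold(disk_usage, 90.0))
print(should_trigger_housekeeping(disk_usage))
```

Here usage grows by two percentage points per hour, so the 90% threshold is predicted roughly 12 hours after the latest sample, which falls inside the 24-hour horizon and would trigger housekeeping.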

The decision to position a Cloud Management Platform (CMP) was driven by the desire to proactively manage governance and budgeting, providing enterprise-level visibility and control. The CMP would also enable autonomy for non-technical personas (e.g. Product Teams) by provisioning infrastructure and services to any hosting environment via a simplified service catalogue. However, behind the scenes, Terraform and Ansible would be doing the heavy lifting, ensuring that the CMP remains loosely coupled and enabling both “Cloud Direct” and “Cloud Brokered” provisioning approaches.

The diagram below highlights how these technologies (alongside Terraform and Ansible) complement each other to enable my proposed automation architecture.

As highlighted in the diagram, the Product Team's primary engagement would be via “user-centric” services, such as Embotics and ServiceNow. Embotics provides a service catalogue for standard services (e.g. Servers, Databases, Web Apps) covering all hosting environments (Public Cloud, Colocation Data Centres and Edge Computing). Where required, ServiceNow would manage all change control, including approvals.
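To show how a catalogue request could be translated into the Terraform and Ansible work happening behind the scenes, here is a minimal Python sketch. The catalogue entries, module directories, playbook paths and `inventory.ini` are all hypothetical, and this is not Embotics' API; it only demonstrates mapping a (service, hosting environment) pair to real CLI invocations: `terraform -chdir=… init`, `terraform -chdir=… apply -auto-approve -var key=value`, and `ansible-playbook -i … playbook`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogueItem:
    terraform_dir: str  # hypothetical Terraform root module
    playbook: str       # hypothetical Ansible playbook


# Hypothetical catalogue: (service, hosting environment) -> automation assets.
CATALOGUE = {
    ("web_app", "public_cloud"): CatalogueItem("modules/azure-web-app", "playbooks/web.yml"),
    ("web_app", "colocation_dc"): CatalogueItem("modules/vsphere-web-vm", "playbooks/web.yml"),
    ("database", "public_cloud"): CatalogueItem("modules/azure-database", "playbooks/db.yml"),
}


def build_commands(service: str, environment: str, params: dict[str, str]) -> list[list[str]]:
    """Translate a catalogue request into Terraform and Ansible command lines.

    Terraform provisions the infrastructure; Ansible then configures it.
    The commands are returned rather than executed so they can be reviewed
    or handed to an automation runner.
    """
    try:
        item = CATALOGUE[(service, environment)]
    except KeyError:
        raise ValueError(f"'{service}' is not offered in '{environment}'") from None
    chdir = f"-chdir={item.terraform_dir}"
    apply = ["terraform", chdir, "apply", "-auto-approve"]
    for key, value in sorted(params.items()):
        apply += ["-var", f"{key}={value}"]
    return [
        ["terraform", chdir, "init"],
        apply,
        ["ansible-playbook", "-i", "inventory.ini", item.playbook],
    ]


for cmd in build_commands("web_app", "colocation_dc", {"name": "shop", "size": "small"}):
    print(" ".join(cmd))
```

Because the catalogue only points at Terraform modules and Ansible playbooks, the same assets could be invoked directly by engineers or brokered through the CMP, which is the loose coupling described above.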

The Engineering Team would primarily work within GitHub, following the GitFlow branching model. As code is pushed to GitHub, automated tasks would be executed via Azure DevOps, following standard CI/CD practice. These tasks could cover a wide range of capabilities, including linters (e.g. ESLint), testing (e.g. Mocha), security analysis (e.g. Checkmarx), package analysis (e.g. Snyk) and document generation (e.g. Pandoc).
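In Azure DevOps these tasks would be defined as pipeline stages; the Python sketch below only models the fail-fast quality-gate behaviour behind them: stages run in order and the pipeline stops at the first failing check, so code that fails, for example, security analysis never reaches later stages. The stage names mirror the tools mentioned above, but the checks here are stand-ins; `command_check` shows one assumed way a real CLI tool (e.g. `["npx", "eslint", "."]`) could be wrapped via its exit code.

```python
import subprocess
from typing import Callable

# A stage is a display name plus a check that returns True when it passes.
Stage = tuple[str, Callable[[], bool]]


def command_check(cmd: list[str]) -> Callable[[], bool]:
    """Wrap a CLI tool (e.g. ["npx", "eslint", "."]) as a pass/fail check on its exit code."""
    return lambda: subprocess.run(cmd).returncode == 0


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure (a fail-fast quality gate)."""
    log: list[str] = []
    for name, check in stages:
        passed = check()
        log.append(f"{name}: {'passed' if passed else 'FAILED'}")
        if not passed:
            return False, log
    return True, log


# Stand-in checks; a real pipeline would invoke the actual tools instead.
stages: list[Stage] = [
    ("lint (e.g. ESLint)", lambda: True),
    ("unit tests (e.g. Mocha)", lambda: True),
    ("security analysis (e.g. Checkmarx)", lambda: False),
    ("package analysis (e.g. Snyk)", lambda: True),
    ("documentation (e.g. Pandoc)", lambda: True),
]
ok, log = run_pipeline(stages)
print(ok)
print("\n".join(log))
```

With the simulated security failure, the run stops after the third stage, and package analysis and documentation generation are never reached.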

Finally, IT Admins/Operations would support the hosting environments, leveraging native services (e.g. Azure Portal, VMware vSphere) and tooling (e.g. Azure PowerShell, Azure CLI).

Conclusion

In conclusion, my automation strategy aims to facilitate a wider paradigm shift, where infrastructure would be defined and maintained as software. I believe this approach would unlock tremendous business value, specifically speed, agility, flexibility and cost optimisation, while also promoting innovation.